AI Reaction Video Maker

Create authentic reaction videos without filming or editing. Agent Opus is an AI reaction video maker that transforms your text prompts into complete, publish-ready reaction content. Describe the reaction you want—surprise, excitement, analysis, commentary—and get a finished video with AI avatars, voiceover, motion graphics, and dynamic visuals. No cameras, no studios, no manual timeline work. Just prompt to publish in minutes, optimized for TikTok, YouTube Shorts, Instagram Reels, and LinkedIn.

Explore what's possible with Agent Opus

Reasons why creators love Agent Opus' AI Reaction Video Maker

Camera-Shy No More

Share your genuine takes and personality without the pressure of performing on camera.

Your Voice, Every Time

Sound exactly like yourself reacting naturally, even when you're generating content at scale.

Consistent Energy Across Videos

Maintain your signature reaction style and enthusiasm in every video without burnout or bad days.

Authentic Reactions, Zero Takes

Capture genuine emotion without multiple filming sessions or awkward retakes that kill spontaneity.

React More, Edit Less

Spend your time finding great content to react to instead of wrestling with timelines and exports.

Trending Before the Trend Dies

React to viral moments instantly without waiting for studio time or editing delays.

How to use Agent Opus' AI Reaction Video Maker

1

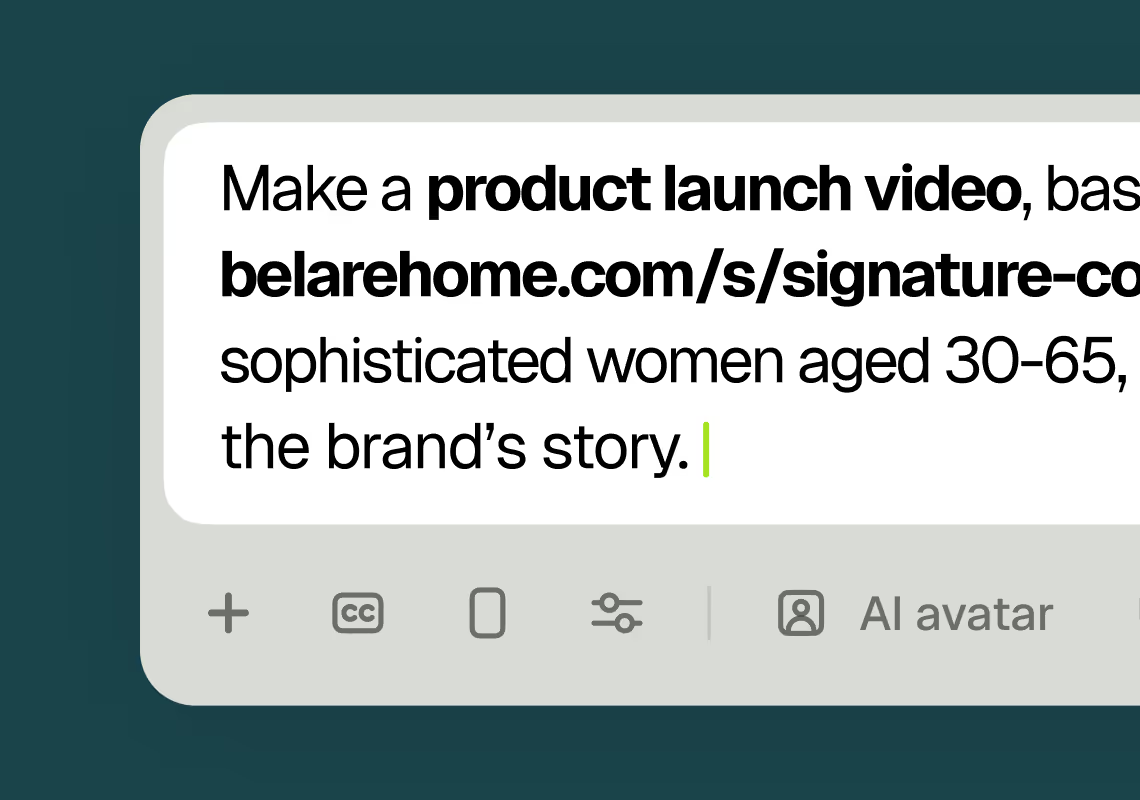

1Describe your video

Paste your brief, script, outline, or blog URL into Agent Opus.

2

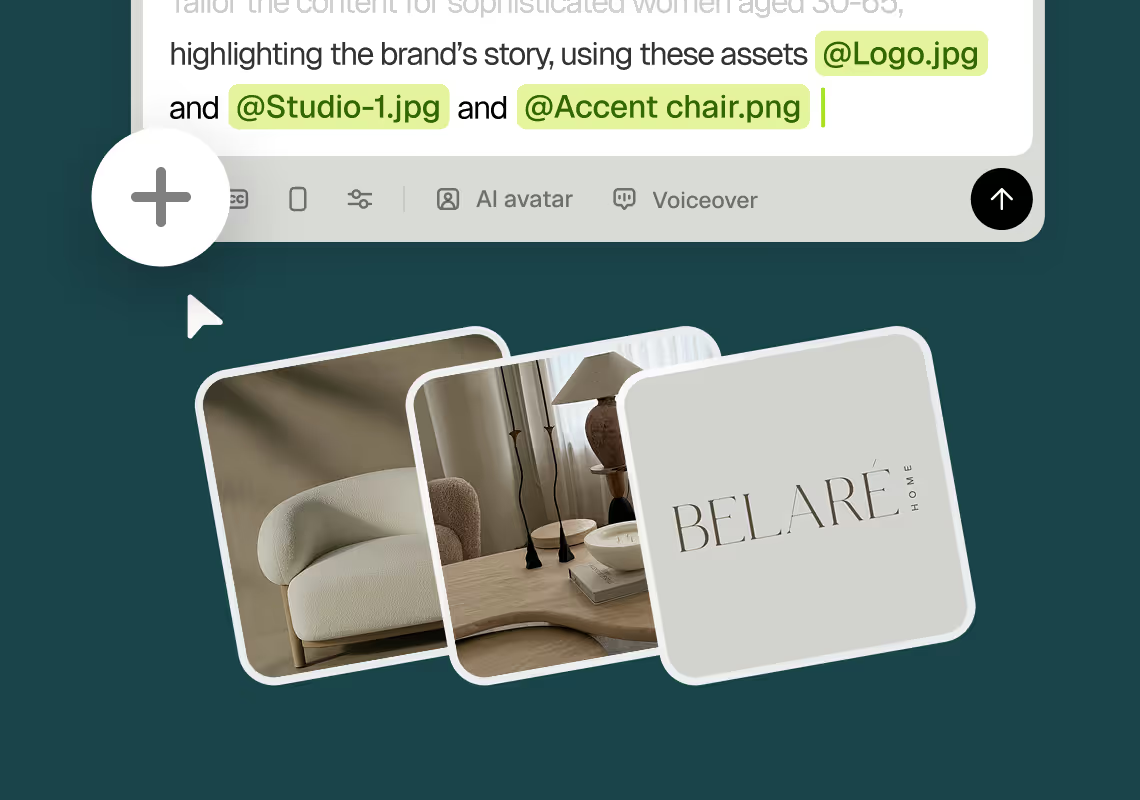

2Add assets and sources

Upload brand assets like logos and product images, or let the AI source stock visuals automatically.

3

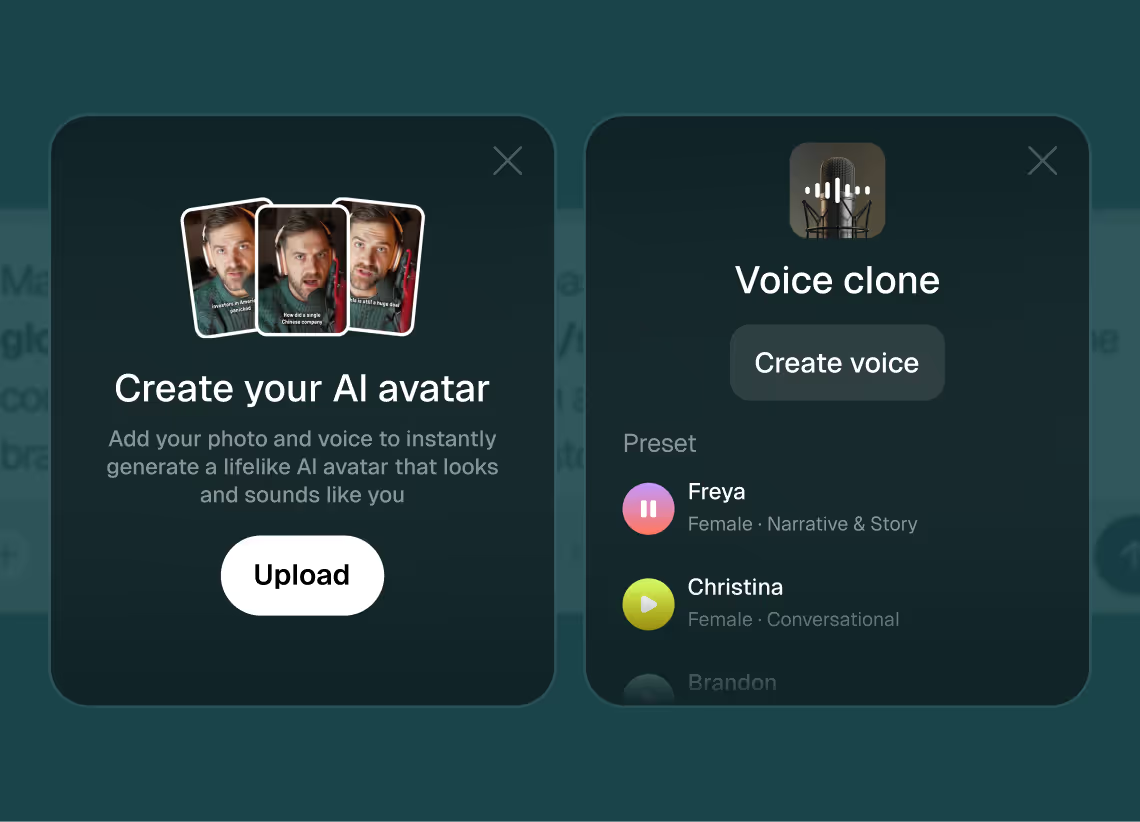

3Choose voice and avatar

Choose a voice (clone your own or pick an AI voice) and an avatar style (your own likeness or an AI avatar).

4

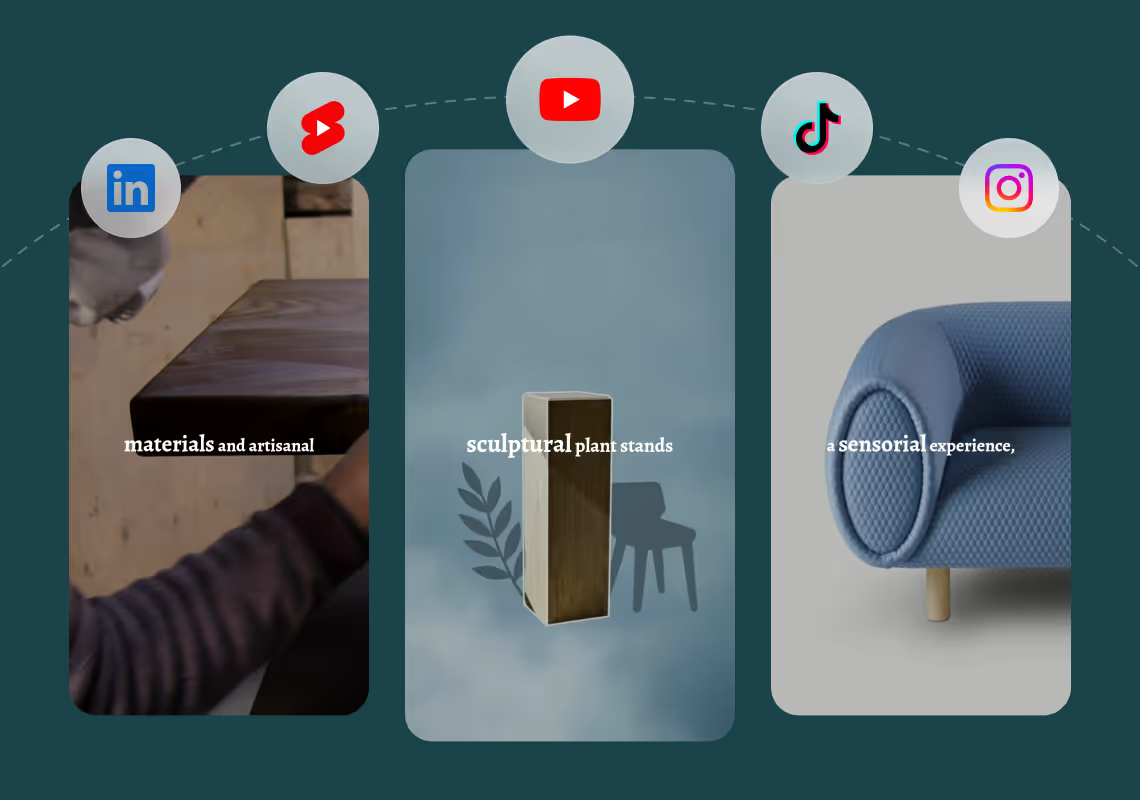

4Generate and publish-ready

Click generate and download your finished reaction video in minutes, ready to publish across all platforms.

8 powerful features of Agent Opus' AI Reaction Video Maker

Synced Commentary Audio

Add AI-generated voiceover commentary that matches the avatar's reactions and timing perfectly.

Instant Reaction Overlays

Generate picture-in-picture reaction videos with AI avatars responding to your source content.

Multi-Angle Reaction Layouts

Choose from side-by-side, corner overlay, or split-screen formats for your reaction videos.

Authentic Facial Expressions

AI avatars display natural reactions like surprise, laughter, and thoughtful pauses in real time.

One-Click Export

Render complete reaction videos optimized for YouTube, TikTok, and Instagram in minutes.

Custom Reaction Prompts

Direct the AI avatar's emotional tone and commentary style through simple text instructions.

Automated Timing Sync

AI analyzes source video highlights and positions reactions at the most engaging moments.

Brand-Matched Avatars

Select or create AI personas that align with your channel's voice and audience expectations.

Testimonials

This looks like a game-changer for us. We're building narrative-driven, visually layered content — and the ability to maintain character and motion consistency across episodes would be huge. If Agent Opus can sync branded motion graphics, tone, and avatar style seamlessly, it could easily become part of our production stack for short-form explainers and long-form investigative visuals.

srtaduck

I reviewed version A and was very impressed. It did very well in almost every aspect users need. You would only have to make very small changes and maybe replace one or two of the pictures, but even so, it could be used as is and still receive decent views, or even have a chance at going viral, depending on the story or content the user chooses.

Jeremy

All in all, LOVE THIS agent. I'm curious to see how I can push it (within reason). Just need to learn to get the consistency right with my prompts.

Rebecca

Frequently Asked Questions

How does an AI reaction video maker handle different reaction styles and tones?

An AI reaction video maker like Agent Opus interprets your text prompt to determine the emotional tone, pacing, and delivery style of your reaction. When you describe a reaction—whether it's excited surprise, thoughtful analysis, skeptical commentary, or enthusiastic endorsement—the system selects appropriate avatar expressions, voice inflections, and visual pacing to match that tone. You control the style through your prompt language. Use words like 'shocked,' 'impressed,' 'concerned,' or 'delighted' to guide the AI's interpretation. The voice synthesis adjusts pitch, speed, and emphasis based on these cues, while the avatar's performance mirrors the emotional arc you describe. For more nuanced control, write your reaction as a script with stage directions or emotional markers. For example, 'Start skeptical, then gradually become convinced as you review the features' gives the AI a clear emotional journey to follow. The motion graphics and visual pacing also adapt—fast cuts and dynamic effects for high-energy reactions, slower pacing and thoughtful transitions for analytical commentary. This flexibility means you can create reaction videos for product reviews, trend commentary, educational responses, or entertainment content, each with the appropriate tone and style. The AI doesn't just read your script; it performs it with the emotional authenticity your audience expects from reaction content.

What are best practices for writing prompts for AI reaction video makers?

Effective prompts for an AI reaction video maker combine clear context, emotional direction, and structural guidance. Start by establishing what you're reacting to—a product, trend, news story, or piece of content. Provide enough background so the AI understands the subject matter and can source relevant visuals. Then specify your reaction arc: how you feel at the start, how that feeling evolves, and where you land by the end. This emotional journey is crucial for authentic reaction videos. Include specific moments you want to emphasize. For example, 'React with surprise when revealing the price point' or 'Show skepticism during the feature comparison, then excitement at the final capability.' These markers help the AI time avatar expressions and voice inflections to match your narrative beats. Describe the visual style you want. Mention if you need product shots, comparison graphics, on-screen text for key points, or specific stock imagery. The more visual detail you provide, the better the AI can assemble supporting graphics and motion elements. Specify your target platform and audience. A TikTok reaction to a viral trend requires different pacing and energy than a LinkedIn reaction to industry news. The AI adjusts video length, cut frequency, and visual density based on platform context. Finally, if you have a script, format it with clear speaker cues and emotional notes. Use parentheticals like '(excited)' or '(thoughtful pause)' to guide delivery. The AI reaction video maker uses all these elements to create a cohesive, authentic reaction that feels natural and engaging, not robotic or scripted.
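One way to keep those elements from slipping is to fill them in as fields before writing the prompt. The sketch below is only an illustration of that habit: `ReactionPrompt` and its field names are hypothetical and not part of Agent Opus, which, as described above, takes plain text. It simply assembles the subject, reaction arc, key moments, visual style, and platform into text you could paste in.

```python
from dataclasses import dataclass, field


@dataclass
class ReactionPrompt:
    """Hypothetical checklist for the prompt elements described above."""

    subject: str                                       # what you are reacting to
    arc: list[str]                                     # emotional journey, in order
    moments: list[str] = field(default_factory=list)   # beats to emphasize
    visuals: list[str] = field(default_factory=list)   # supporting graphics to request
    platform: str = "TikTok"
    audience: str = "general viewers"

    def render(self) -> str:
        """Assemble the fields into one plain-text prompt."""
        if not self.subject.strip():
            raise ValueError("subject is required")
        if not self.arc:
            raise ValueError("arc needs at least one emotion")
        lines = [
            f"Subject: {self.subject}",
            f"Reaction arc: {' -> '.join(self.arc)}",
        ]
        if self.moments:
            lines.append("Key moments:")
            lines.extend(f"- {moment}" for moment in self.moments)
        if self.visuals:
            lines.append(f"Visual style: {', '.join(self.visuals)}")
        lines.append(f"Platform: {self.platform}; audience: {self.audience}")
        return "\n".join(lines)


# Example values are made up for illustration.
prompt = ReactionPrompt(
    subject="Launch video for a new budget smartphone",
    arc=["skeptical", "curious", "excited"],
    moments=["React with surprise when revealing the price point"],
    visuals=["product shots", "comparison graphics"],
    platform="YouTube Shorts",
    audience="tech enthusiasts",
)
print(prompt.render())
```

The two required fields mirror the advice above: a prompt with no subject or no emotional arc gives the AI little to work with, so the sketch refuses to render one.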

Can AI reaction video makers maintain consistent branding across multiple reaction videos?

Yes, an AI reaction video maker like Agent Opus maintains brand consistency through several mechanisms that ensure every reaction video aligns with your visual identity and voice. First, avatar consistency: once you select or create a custom avatar, that same digital presence appears in all your reaction videos, building audience recognition and trust. If you clone your own voice, that unique vocal signature carries across every video, creating the same personal connection as if you filmed each reaction yourself. Brand assets integrate automatically—upload your logo, color palette, lower-third graphics, and product imagery once, and the AI incorporates them into every generated video. This means your reaction content always features your branding elements in consistent positions and styles, whether you're reacting to industry news, competitor products, or trending topics. Visual style templates let you define motion graphics preferences, transition styles, and text treatment. Once set, these aesthetic choices apply to all future reaction videos, creating a cohesive look across your content library. This is especially valuable for creators and brands producing regular reaction series where visual consistency reinforces brand identity. Voice and tone consistency extends beyond the audio. You can establish a reaction style guide in your prompts—always analytical, always enthusiastic, always skeptical-then-convinced—and the AI maintains that personality across videos. For teams, this means multiple people can generate reaction videos that sound and look like they came from the same creator, maintaining brand voice even when scaling production. The AI reaction video maker essentially becomes your brand's reaction video production system, ensuring every output meets your quality standards and visual identity without manual oversight or post-production adjustments.

How do AI reaction video makers handle timing and pacing for authentic reactions?

Timing and pacing are critical for authentic reaction videos, and an AI reaction video maker analyzes your content structure to create natural rhythm and emotional beats. The system identifies key revelation moments (the points where new information appears or your reaction shifts) and adjusts visual pacing around these beats. For example, if you're reacting to a product reveal, the AI might slow down at the moment of unveiling, hold on the avatar's surprised expression, then accelerate through feature explanations with quick cuts and dynamic graphics. This mirrors how human editors pace reaction content for maximum impact. Pauses matter enormously in reaction videos. The AI inserts natural hesitations before big statements, brief silences after surprising information, and thoughtful gaps during analytical moments. These aren't random; they're placed based on script analysis and emotional context. A skeptical reaction includes longer pauses as if you're considering the claim. An excited reaction features rapid-fire delivery with minimal gaps. The voice synthesis and avatar performance sync to these timing choices, creating the illusion of spontaneous thought rather than scripted reading. Visual pacing complements vocal timing. When you pause to consider something, the video might hold on a product shot or display a comparison graphic, giving viewers time to process information alongside your avatar. When you're excited and talking quickly, the visuals accelerate: faster cuts, more motion graphics, and dynamic transitions that match your energy. For reaction videos responding to other content, the AI can integrate clips or screenshots of what you're reacting to, timing these inserts to match your commentary. You reference a specific moment, and the visual appears exactly when you mention it. This synchronization between what you're saying, how you're saying it, and what viewers see creates the seamless flow that makes reaction videos engaging and authentic, even when generated entirely from text.