Chip Export Controls and AI Video Generation: Multi-Model Platforms Win

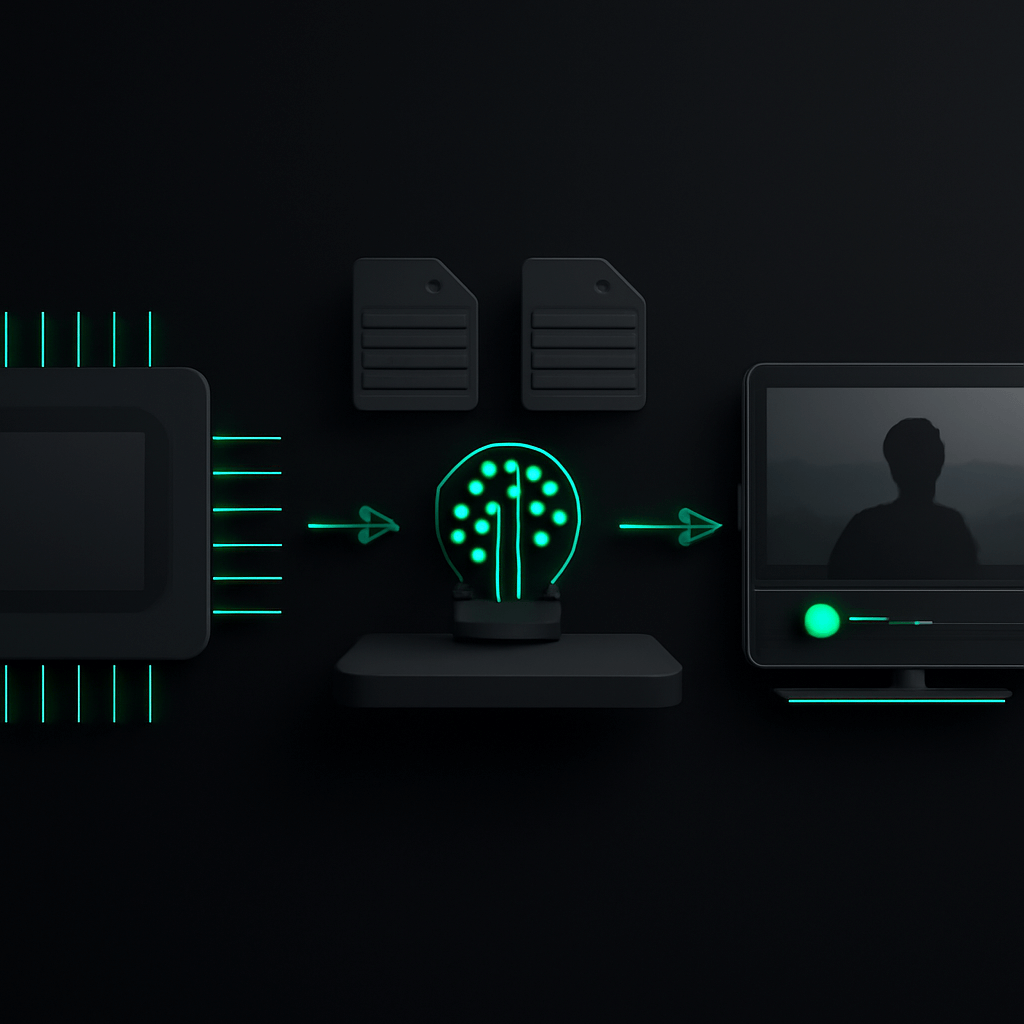

Chip Export Controls and AI Video Generation: Why Multi-Model Platforms Matter More Than Ever

The U.S. government is reportedly drafting sweeping new chip export controls that could reshape the entire AI landscape. According to recent reports, the proposed regulations would give federal authorities oversight of every chip export sale, regardless of origin country. For creators who rely on AI video generation tools, this development signals a fundamental shift in how we should think about platform selection and workflow resilience.

Chip export controls directly affect the computational infrastructure that powers every major AI video model. When access to advanced semiconductors becomes restricted or unpredictable, the companies building these models face development slowdowns, capacity constraints, and potential service disruptions. For video creators, the solution is clear: diversify your AI toolkit before you need to.

What the New Chip Export Controls Actually Mean

The proposed regulations represent a dramatic expansion of U.S. semiconductor policy. Unlike previous targeted restrictions on specific countries, this framework would establish federal involvement in chip exports globally. The implications ripple through the entire AI supply chain.

Key Elements of the Proposed Controls

- Universal oversight: Every chip export sale would require some level of government review, regardless of destination

- Expanded scope: Controls would apply to chips manufactured anywhere, not just those produced domestically

- Supply chain tracking: New requirements for documenting end-use and preventing diversion

- Licensing complexity: Additional bureaucratic layers that could slow procurement timelines

These changes matter because AI video generation models require massive computational resources. Training a single state-of-the-art video model can consume thousands of high-end GPUs running for months. When chip availability becomes uncertain, model development timelines stretch and operational costs climb.

How Chip Restrictions Impact AI Video Model Development

The connection between semiconductor policy and your video creation workflow might seem abstract, but the effects are concrete and measurable. Understanding this relationship helps explain why platform architecture decisions matter so much right now.

Training Infrastructure Challenges

Every AI video model you use today was trained on clusters of specialized chips. The companies behind Kling, Hailuo MiniMax, Runway, and other models depend on continuous access to cutting-edge hardware. Export controls create several problems:

- Delayed model updates: When companies cannot expand their training infrastructure, new model versions take longer to develop

- Regional availability gaps: Models trained in regions with restricted chip access may lag behind competitors

- Capacity constraints: Inference (running the models for users) also requires chips, meaning service availability could fluctuate

The Single-Provider Risk

If you rely on just one AI video generation tool, you are exposed to whatever challenges that provider faces. A chip shortage affecting their primary model means your production pipeline stops. A geopolitical development restricting their hardware access means your deadlines slip.

This is not hypothetical. AI services have already experienced capacity constraints, waitlists, and feature rollbacks when infrastructure challenges emerged. The proposed export control framework would increase the probability of such disruptions.

Why Multi-Model Platforms Provide Essential Protection

The strategic response to supply chain uncertainty is diversification. In AI video generation, that means using platforms that aggregate multiple models rather than betting everything on a single provider.

The Aggregation Advantage

Agent Opus operates as a multi-model AI video generation platform, combining capabilities from Kling, Hailuo MiniMax, Veo, Runway, Sora, Seedance, Luma, Pika, and other leading models. This architecture provides natural hedging against single-provider disruptions.

When you submit a video project to Agent Opus, the platform automatically selects the optimal model for each scene based on your requirements. If one model experiences capacity issues or temporary unavailability, the system routes to alternatives without requiring any action from you.
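The routing idea described above can be illustrated with a minimal sketch. It is not Agent Opus's actual implementation: the `ModelStatus` record, the `quality_score` field, and the placeholder model names are all assumptions made for illustration. The sketch filters out unavailable models and picks the best-scoring one that remains.

```python
from dataclasses import dataclass


@dataclass
class ModelStatus:
    """Hypothetical snapshot of one video model's fit and availability."""
    name: str
    available: bool
    quality_score: float  # assumed 0..1 fit score for the scene type


def pick_model(candidates: list[ModelStatus]) -> str:
    """Return the best-scoring available model, or raise if none is up."""
    usable = [m for m in candidates if m.available]
    if not usable:
        raise RuntimeError("no video model is currently available")
    return max(usable, key=lambda m: m.quality_score).name


# Placeholder names; the top-scoring model is marked unavailable.
candidates = [
    ModelStatus("model_a", available=False, quality_score=0.9),
    ModelStatus("model_b", available=True, quality_score=0.8),
    ModelStatus("model_c", available=True, quality_score=0.6),
]
print(pick_model(candidates))  # model_b, because model_a is unavailable
```

A real router would likely weigh more signals, such as queue length or cost, but the fallback principle is the same: a scene is blocked only when every candidate is down.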

Practical Benefits Beyond Risk Mitigation

The multi-model approach delivers advantages even when supply chains function normally:

- Best-in-class results: Different models excel at different content types. Agent Opus matches each scene to the model that handles it best.

- Longer-form content: By stitching clips from multiple models, Agent Opus creates videos exceeding three minutes, something single-model tools struggle with.

- Continuous improvement: As new models emerge, the platform integrates them automatically. Your capabilities expand without switching tools.

Building a Resilient AI Video Workflow

Adapting to regulatory uncertainty requires proactive planning. Here is how to structure your video production approach for maximum resilience.

Step 1: Audit Your Current Dependencies

List every AI tool in your video workflow. For each one, identify the underlying model provider and their likely chip supply chain exposure. Tools built on a single proprietary model carry more risk than aggregators.
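One lightweight way to run this audit is to record each tool alongside the model providers it depends on, then flag the tools with only one. The sketch below assumes you can identify the providers yourself; the tool and provider names are hypothetical placeholders.

```python
def single_provider_tools(workflow: dict[str, list[str]]) -> list[str]:
    """Return tools that depend on one model provider or fewer, sorted by name."""
    return sorted(
        tool for tool, providers in workflow.items()
        if len(set(providers)) <= 1
    )


# Hypothetical workflow: tool name -> underlying model providers.
workflow = {
    "storyboard_tool": ["provider_x"],
    "aggregator_platform": ["provider_x", "provider_y", "provider_z"],
    "upscaler": ["provider_y"],
}

for tool in single_provider_tools(workflow):
    print(f"single-provider exposure: {tool}")
```

Tools with an empty provider list are flagged too, since an unknown dependency is a risk worth investigating.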

Step 2: Establish Multi-Model Access

Ensure you have accounts and familiarity with platforms that offer model diversity. Agent Opus provides access to the major AI video models through a single interface, simplifying this step considerably.

Step 3: Test Alternative Pathways

Before a crisis hits, run test projects through different model combinations. Understand how your content performs across various AI systems so you can adapt quickly if your primary option becomes unavailable.

Step 4: Standardize Your Inputs

Agent Opus accepts multiple input formats: prompts, scripts, outlines, or blog article URLs. Standardizing how you prepare project briefs means you can route work to any available model without reformatting.
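A standardized brief can be as simple as a small record with a fixed set of input kinds. The sketch below is an assumption about how you might structure briefs on your side. It is not an Agent Opus schema, and the `ProjectBrief` and `make_brief` names are hypothetical.

```python
from dataclasses import dataclass

# Input kinds taken from the formats listed above.
ALLOWED_KINDS = {"prompt", "script", "outline", "url"}


@dataclass(frozen=True)
class ProjectBrief:
    """Hypothetical tool-agnostic project brief."""
    kind: str
    content: str


def make_brief(kind: str, content: str) -> ProjectBrief:
    """Normalize and validate a brief before sending it to any tool."""
    kind = kind.strip().lower()
    content = content.strip()
    if kind not in ALLOWED_KINDS:
        raise ValueError(f"unsupported input kind: {kind!r}")
    if not content:
        raise ValueError("brief content is empty")
    return ProjectBrief(kind, content)


brief = make_brief(" Script ", "Scene 1: a city skyline at dawn.\n")
print(brief.kind, "|", brief.content)
```

Keeping briefs in one validated shape means the same project can be handed to a different tool without rewriting it.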

Step 5: Build Buffer Time Into Deadlines

During periods of supply chain uncertainty, build extra time into production schedules. Multi-model platforms reduce but do not eliminate the possibility of delays.
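As a rough illustration of the arithmetic, the sketch below works backward from a deadline and pads the production estimate by a buffer ratio. The 25% default is an arbitrary assumption, not a recommendation from any provider, and `start_by` is a hypothetical helper name.

```python
import math
from datetime import date, timedelta


def start_by(deadline: date, production_days: int, buffer_ratio: float = 0.25) -> date:
    """Latest start date that leaves room for production plus a buffer."""
    buffer_days = math.ceil(production_days * buffer_ratio)
    return deadline - timedelta(days=production_days + buffer_days)


# 8 production days + ceil(8 * 0.25) = 2 buffer days -> start 10 days early.
print(start_by(date(2026, 3, 31), production_days=8))  # 2026-03-21
```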

Step 6: Monitor Industry Developments

Stay informed about chip policy changes and their effects on AI providers. Early awareness gives you time to adjust workflows before disruptions impact your projects.

Common Mistakes When Navigating AI Supply Chain Risks

Avoid these pitfalls as you adapt your video production strategy:

- Assuming stability: The AI landscape is changing rapidly. What works today may face constraints tomorrow. Plan accordingly.

- Ignoring geographic factors: Models developed in different regions face different regulatory exposures. Diversify across geographies, not just providers.

- Waiting for problems: By the time a supply chain issue affects your workflow, alternatives may be overloaded with other displaced users. Establish backup options now.

- Overcomplicating workflows: You do not need accounts with every AI video tool. A single multi-model platform like Agent Opus provides diversification without complexity.

- Neglecting input flexibility: If your workflow depends on a specific input format that only one tool accepts, you are creating unnecessary lock-in.

What Agent Opus Offers in an Uncertain Landscape

Agent Opus was designed for exactly this kind of environment. The platform combines multiple leading AI video models into a unified interface that automatically optimizes for quality and availability.

Core Capabilities

- Multi-model scene assembly: Each scene in your video can use a different underlying model, selected automatically for best results

- Flexible inputs: Start from a prompt, detailed script, outline, or even a blog URL

- AI motion graphics: Enhance videos with generated visual elements

- Voiceover options: Use AI voices or clone your own voice for narration

- Avatar integration: Include AI-generated or user-provided avatars

- Royalty-free assets: Automatic sourcing of images and background soundtracks

- Social-ready outputs: Export in aspect ratios optimized for different platforms

The result is prompt-to-publish-ready video without the fragility of single-provider dependence.

Key Takeaways

- New chip export controls could disrupt AI video model development and availability across the industry

- Single-model tools expose creators to concentrated supply chain risk

- Multi-model platforms like Agent Opus distribute risk across multiple providers and geographies

- Automatic model selection ensures optimal results while providing built-in fallback options

- Proactive workflow planning now prevents production disruptions later

- The regulatory environment will likely become more complex, making platform architecture increasingly important

Frequently Asked Questions

How do chip export controls affect AI video generation tools specifically?

Chip export controls impact AI video generation by constraining the hardware available for both training new models and running inference for users. Video generation typically requires significantly more computational power than text or image AI, making these tools particularly sensitive to chip availability. When providers cannot access sufficient GPUs, they may slow feature development, implement usage caps, or experience service degradation. Multi-model platforms like Agent Opus mitigate this by routing work across multiple providers with different supply chain exposures.

Can Agent Opus automatically switch models if one becomes unavailable due to supply issues?

Yes, Agent Opus is designed to handle model availability fluctuations automatically. The platform evaluates multiple factors when selecting which model to use for each scene, including current availability and capacity. If a particular model experiences constraints, Agent Opus routes to alternatives without requiring user intervention. This happens transparently during video generation, ensuring your projects complete even when individual providers face challenges. The multi-model architecture makes this seamless switching possible.

Which AI video models does Agent Opus currently integrate for supply chain diversification?

Agent Opus integrates leading AI video models including Kling, Hailuo MiniMax, Veo, Runway, Sora, Seedance, Luma, and Pika. This selection spans providers with different geographic bases, funding sources, and infrastructure arrangements, providing meaningful diversification against supply chain disruptions. The platform continuously evaluates and adds new models as they become available, ensuring users always have access to the latest capabilities while maintaining resilience through provider diversity.

How should video creators prepare their workflows for potential AI model disruptions?

Video creators should take several preparatory steps: First, audit current tool dependencies to understand single-provider exposure. Second, establish access to multi-model platforms like Agent Opus before disruptions occur. Third, standardize input formats (scripts, outlines, prompts) that work across multiple systems. Fourth, test alternative model combinations on non-urgent projects to understand quality differences. Finally, build buffer time into production schedules during periods of regulatory uncertainty. These steps ensure workflow continuity regardless of which specific models face constraints.

Will chip export controls make AI video generation more expensive for creators?

Chip export controls could increase costs across the AI video generation industry by raising infrastructure expenses for model providers. However, multi-model platforms offer some cost protection through competitive routing. Agent Opus can direct work to models offering the best value at any given time, rather than being locked into a single provider's pricing. Additionally, aggregator platforms often negotiate volume arrangements across multiple providers, potentially offering better rates than individual tool subscriptions during supply-constrained periods.

How does geographic diversification of AI models protect against regulatory risks?

Geographic diversification matters because chip export controls and AI regulations vary by jurisdiction. A model developed and hosted in one region may face different constraints than one based elsewhere. Agent Opus integrates models from providers across multiple countries and regulatory environments. When restrictions affect one region's chip supply or AI development, models from other regions remain available. This geographic spread within a single platform offers protection that is harder to achieve by managing several single-region tools separately.

What to Do Next

The chip export control landscape will continue evolving throughout 2026 and beyond. Creators who establish resilient, multi-model workflows now will maintain production continuity while others scramble to adapt. Agent Opus provides the diversified AI video generation infrastructure you need to weather supply chain uncertainty while accessing the best capabilities the industry offers. Explore what multi-model video generation can do for your projects at opus.pro/agent.