Transcribe Video with Speaker Names via the OpusClip API

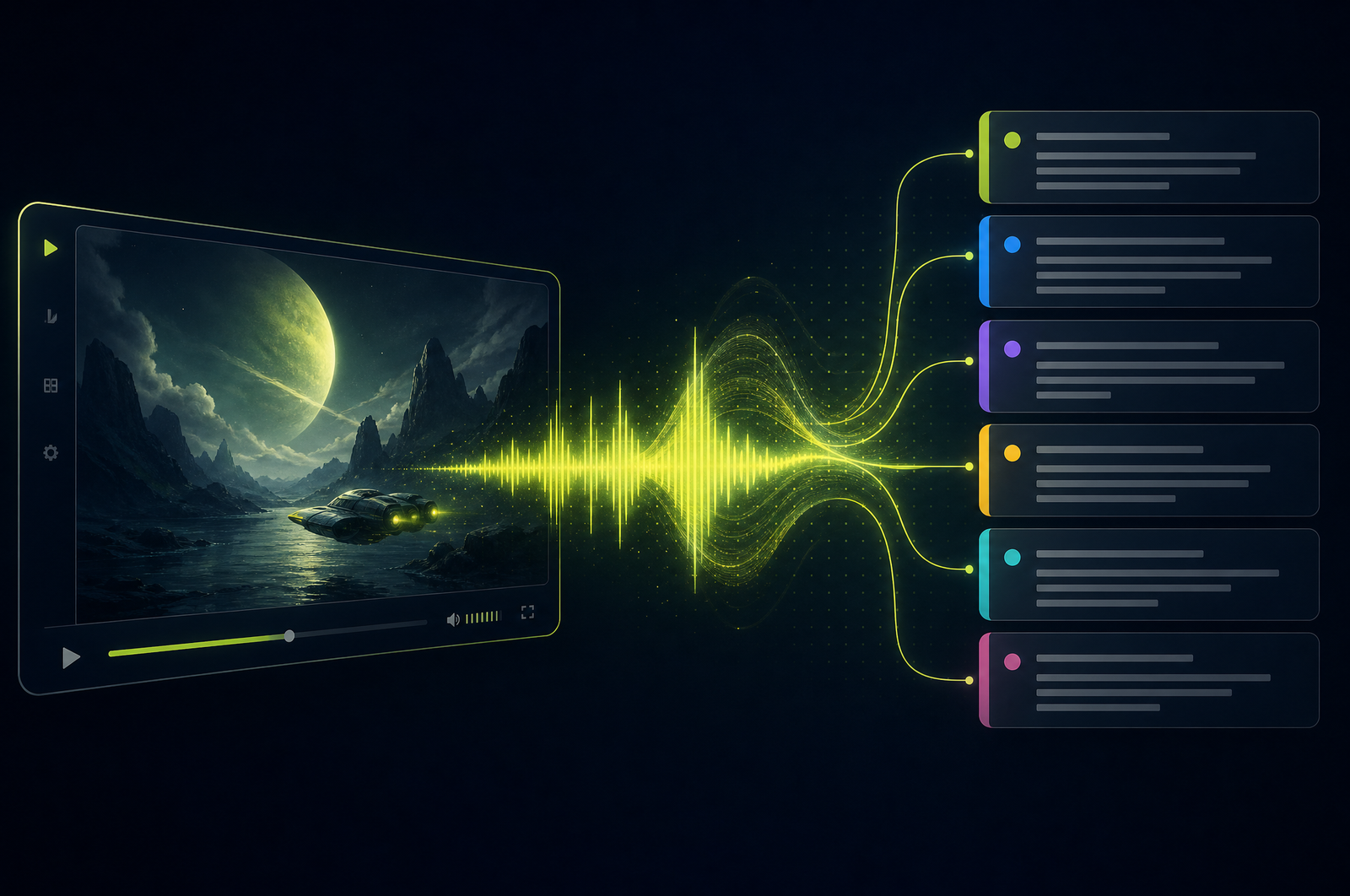

A transcript is the foundation of every other video workflow — captions, summaries, chapters, search, content repurposing. Most APIs treat transcription as a generic speech-to-text problem and leave you to wire in speaker diarization, punctuation cleanup, and timestamp alignment separately. A modern video transcription API integrates these into one call: word-level timestamps, speaker attribution, clean punctuation, multi-language support.

This guide is a developer-focused look at how video transcription APIs work and how the OpusClip API will support transcription as a first-class endpoint when it becomes generally available.

The OpusClip API is currently in early access; request access at opus.pro/api. Code examples for the OpusClip endpoints will be published here once the v1 spec is finalized.

Key takeaways

• Production transcription APIs publish 92-97% word-level accuracy on clear English audio.

• Speaker diarization should be integrated, not bolted on. Running it as a separate step before or after transcription introduces alignment errors.

• Output formats vary: JSON (programmatic use), SRT/VTT (captioning), paragraph-format (show notes), plain text (LLM input).

• Vocabulary hints (proper nouns, technical jargon, product names) significantly improve accuracy on domain-specific content.

• The OpusClip API will support transcription as a standalone endpoint and as a building block for captions, clips, summaries, and chapters.

Why integrated transcription beats stitched-together pipelines

Most teams that need transcripts end up chaining tools:

• Whisper for speech-to-text

• pyannote or a separate diarization tool for speaker labels

• A custom alignment step to merge them

• A formatter to produce SRT/VTT

Each step introduces errors. Speaker labels drift from transcript timing. Alignment math leaves timestamps off by seconds. Formatter idiosyncrasies break downstream parsers.
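
To make the alignment problem concrete, here is a minimal sketch of the "custom alignment step" a stitched pipeline typically needs: each transcribed word is assigned to whichever diarization turn overlaps it most. The `Word` and `SpeakerTurn` types and the `assign_speakers` function are hypothetical names for illustration; they are not the output types of Whisper, pyannote, or the OpusClip API.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Word:
    text: str
    start: float  # seconds
    end: float    # seconds


@dataclass
class SpeakerTurn:
    speaker: str
    start: float  # seconds
    end: float    # seconds


def overlap(a_start: float, a_end: float, b_start: float, b_end: float) -> float:
    """Length in seconds of the intersection of two intervals (0 if disjoint)."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def assign_speakers(
    words: List[Word], turns: List[SpeakerTurn]
) -> List[Tuple[Word, Optional[str]]]:
    """Label each word with the speaker whose turn overlaps it the most.

    Words that overlap no turn at all are labeled None.
    """
    labeled = []
    for word in words:
        best_speaker: Optional[str] = None
        best_overlap = 0.0
        for turn in turns:
            shared = overlap(word.start, word.end, turn.start, turn.end)
            if shared > best_overlap:
                best_speaker, best_overlap = turn.speaker, shared
        labeled.append((word, best_speaker))
    return labeled
```

A word that straddles a turn boundary is decided by a fraction of a second of overlap, and a word that falls in a gap between turns gets no label at all. Those edge cases are where the two tools' independent clocks show up as speaker-label drift.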

An integrated transcription API runs all of these steps in a single pass. The output is cleaner because diarization and timing share the same internal representation, so there is no separate merge step in which they can disagree.

What a video transcription API does

Three stages, ideally fused:

1. Speech-to-text. Audio extracted from the video runs through a transcription model. The best models handle 30+ languages, strong accents, and modest background noise.

2. Timing alignment. Each word maps to a start/end timestamp. Frame-accurate alignment matters for captioning workflows.

3. Diarization and formatting. Speaker labels are assigned to each segment, punctuation and capitalization are added, and the output is rendered in the requested format.

The integration is the differentiator. Standalone transcription tools produce transcripts; integrated APIs produce structured, speaker-attributed, timing-aligned content.
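
As a rough illustration of what "structured, speaker-attributed, timing-aligned" output looks like in practice, the sketch below groups word-level records into speaker segments. The `TranscriptWord` and `Segment` shapes and the one-second `max_gap` default are assumptions made for this example, not a published OpusClip schema.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TranscriptWord:
    text: str
    start: float  # seconds
    end: float    # seconds
    speaker: str


@dataclass
class Segment:
    speaker: str
    start: float
    end: float
    text: str


def to_segments(words: List[TranscriptWord], max_gap: float = 1.0) -> List[Segment]:
    """Merge consecutive words into segments.

    A new segment starts when the speaker changes or when the silence
    since the previous word exceeds max_gap seconds.
    """
    segments: List[Segment] = []
    for word in words:
        last = segments[-1] if segments else None
        same_turn = (
            last is not None
            and last.speaker == word.speaker
            and word.start - last.end <= max_gap
        )
        if same_turn:
            last.end = word.end
            last.text += " " + word.text
        else:
            segments.append(Segment(word.speaker, word.start, word.end, word.text))
    return segments
```

Because every word already carries its own speaker and timestamps, the grouping is a single linear pass with no cross-referencing between separate tool outputs.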

What to consider when integrating

Format support. JSON, SRT, VTT, paragraph-format, plain text — request the formats you actually need. Don't post-process if the API can output them natively.
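
If an API only returns JSON, the conversion you would otherwise have to maintain looks something like this sketch, which renders speaker segments as SRT. The segment dictionaries with `start`, `end`, `speaker`, and `text` keys are an assumed shape, not a documented response format.

```python
from typing import Dict, List


def srt_timestamp(seconds: float) -> str:
    """Format seconds as the SRT timestamp HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments: List[Dict]) -> str:
    """Render segments as numbered SRT cues separated by blank lines."""
    cues = []
    for index, seg in enumerate(segments, start=1):
        cues.append(
            f"{index}\n"
            f"{srt_timestamp(seg['start'])} --> {srt_timestamp(seg['end'])}\n"
            f"{seg['speaker']}: {seg['text']}\n"
        )
    return "\n".join(cues)
```

WebVTT is close but not identical: the file starts with a `WEBVTT` header line and timestamps use a period instead of a comma before the milliseconds. Small differences like these are the reason to request native output when it is available.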

Language coverage. Major languages (English, Spanish, Portuguese, French, German) are excellent across most APIs. Lower-resource languages (Bengali, Vietnamese, Swahili) vary widely. Sample your target languages.
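
One way to sample a language is to hand-correct a short clip and score the API output against it with word error rate (WER): the word-level edit distance divided by the number of reference words. The sketch below is a plain implementation under simple assumptions: whitespace tokenization and lowercasing only, with no punctuation or number normalization, which a real evaluation would likely add.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (classic Levenshtein, one row at a time).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)
```

Word accuracy as quoted by vendors is roughly `1 - WER`, so a WER of 0.05 corresponds to the "95% accurate" figure. Running the same clip with and without vocabulary hints gives a direct measure of what the hints buy you.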

Vocabulary hints. Domain-specific content (technical jargon, product names, proper nouns) needs vocabulary hints. Most APIs let you pass a list of terms to bias the model toward.

Filler word handling. Some workflows want verbatim transcripts (legal, medical); others want cleaned transcripts with fillers such as "um" and "uh" removed. The API should expose this as a configuration option.
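
When the API only returns verbatim output, a cleaned view can be derived client-side. This is a small sketch assuming word records are dictionaries with a `text` key; the filler list is illustrative and English-only.

```python
import re
from typing import Dict, Iterable, List

# Illustrative English fillers; extend per language and house style.
FILLERS = frozenset({"um", "uh", "er", "erm"})


def clean_fillers(words: Iterable[Dict], fillers: frozenset = FILLERS) -> List[Dict]:
    """Return the words whose text, stripped of punctuation, is not a filler.

    The input is left unmodified, so the verbatim transcript stays available.
    """
    kept = []
    for word in words:
        normalized = re.sub(r"[^\w']", "", word["text"]).lower()
        if normalized not in fillers:
            kept.append(word)
    return kept
```

Matching on the whole normalized token means "Um," is dropped while "umbrella" is kept. The remaining words keep their original timestamps, so captions built from the cleaned list still line up with the audio.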

Word-level vs. segment-level confidence. Word-level confidence is useful for surfacing low-confidence segments for human review. Most production APIs return both.
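
A review queue can be built directly from word-level confidence. The sketch below collects runs of adjacent low-confidence words into spans a human can jump to. The `confidence`, `start`, `end`, and `text` keys and the 0.8 threshold are assumptions for illustration; the right threshold depends on the model and the content.

```python
from typing import Dict, List


def low_confidence_spans(words: List[Dict], threshold: float = 0.8) -> List[Dict]:
    """Group runs of adjacent words with confidence below threshold into spans."""
    spans: List[Dict] = []
    previous_flagged = False
    for word in words:
        flagged = word["confidence"] < threshold
        if flagged and previous_flagged:
            # Extend the span opened by the previous low-confidence word.
            spans[-1]["end"] = word["end"]
            spans[-1]["text"] += " " + word["text"]
        elif flagged:
            spans.append(
                {"start": word["start"], "end": word["end"], "text": word["text"]}
            )
        previous_flagged = flagged
    return spans
```

Each span carries a start and end time, so a review tool can seek straight to the uncertain audio instead of asking a reviewer to reread the whole transcript.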

Profanity filtering. Configurable masking is important for some use cases (broadcast, children's content).
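
For reference, configurable masking amounts to something like the following sketch: whole-word, case-insensitive matching against a caller-supplied blocklist, keeping the first letter and masking the rest. The `mask_terms` function and its masking style are hypothetical; an API may mask differently or offer removal instead.

```python
import re
from typing import Iterable


def mask_terms(text: str, blocked: Iterable[str], mask_char: str = "*") -> str:
    """Mask whole-word, case-insensitive matches of blocked terms.

    The first character is kept and the remainder replaced with mask_char.
    """
    terms = sorted(set(blocked), key=len, reverse=True)
    if not terms:
        return text
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(term) for term in terms) + r")\b",
        re.IGNORECASE,
    )

    def _mask(match: re.Match) -> str:
        word = match.group(0)
        return word[0] + mask_char * (len(word) - 1)

    return pattern.sub(_mask, text)
```

The word boundaries matter: without them a blocklist entry would also mask innocent words that merely contain it.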

Long-form support. Confirm the API handles multi-hour content in one job. For very long content, prefer webhook delivery over polling.
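
Where webhooks are not an option, polling with exponential backoff keeps request volume reasonable on long jobs. This sketch is deliberately API-agnostic: `fetch_status` is any caller-supplied function that returns the job's current status, and the `"completed"` and `"failed"` strings are placeholder values, not documented OpusClip statuses.

```python
import time
from typing import Callable


def poll_until_done(
    fetch_status: Callable[[], str],
    max_attempts: int = 10,
    base_delay: float = 2.0,
    max_delay: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Call fetch_status until it reports a terminal state.

    The wait doubles after each non-terminal response, capped at max_delay.
    Raises TimeoutError if no terminal state is seen within max_attempts.
    """
    delay = base_delay
    for _ in range(max_attempts):
        status = fetch_status()
        if status in ("completed", "failed"):  # placeholder terminal states
            return status
        sleep(delay)
        delay = min(delay * 2, max_delay)
    raise TimeoutError(f"job not finished after {max_attempts} status checks")
```

Even with backoff, a multi-hour job means many wasted status checks, which is why webhook delivery is the better default for long content.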

Common use cases by team type

• Podcasters. Episode transcripts for show notes, blog content, and SEO landing pages.

• Course platforms. SRT files for every lesson to satisfy accessibility and improve searchability.

• Sales operations. Searchable archives of recorded customer calls.

• Legal and HR. Verified transcripts of interviews and depositions.

• Media and publishing. Long-form interview transcripts as the basis for articles.

Common pitfalls

• Stitching transcription and diarization manually. Alignment errors are inevitable. Use integrated APIs that handle both in one pass.

• Ignoring vocabulary hints. Default models guess at proper nouns. A list of expected terms takes seconds to configure and can lift accuracy on domain content, for example from around 90% to 95% or higher.

• Forgetting confidence scoring. Without flagging low-confidence segments for review, you'll publish errors. Always check word-level confidence.

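• Auto-trusting transcripts for high-stakes content. Modern transcription is ~95% accurate, which means roughly 1 in 20 words is wrong. Assuming about 150 spoken words per minute, a one-hour recording contains around 9,000 words, so expect on the order of 450 errors. For legal, medical, or PR-sensitive content, human review is still required.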

• Format mismatch downstream. Your captioning tool expects WebVTT; your show notes tool wants paragraph-format. Request both upfront from one transcription pass.

How OpusClip transcription will work

The OpusClip API is currently in early access. The transcription workflow is built around:

• Word-level timestamps with confidence scoring

• Integrated speaker diarization (anonymous or named)

• Output formats: JSON, SRT, VTT, paragraph-format, plain text, custom delimited

• Vocabulary hints and profanity filtering as standard options

• 30+ language support with language auto-detection

• Long-form support (up to 4 hours per source)

Full code examples and a parameter reference will be published in the developer docs when the v1 spec is finalized. To get notified or apply for early access, visit opus.pro/api.

FAQ

How does this compare to AssemblyAI, Deepgram, or Whisper?

Word accuracy is broadly comparable across modern production APIs. Integration is the differentiator: OpusClip's transcription is designed to feed directly into captions, clips, summaries, and chapters from one source pass.

Can I get word-level timestamps without the full JSON?

Yes — most APIs offer lightweight formats like CSV (word, start_sec, end_sec, speaker, confidence) for programmatic use without large JSON parsing.
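
If only JSON is available, the same flat layout is easy to produce yourself. The sketch below writes the five columns named above using Python's standard `csv` module; the input is assumed to be a list of dictionaries already keyed by those column names.

```python
import csv
import io
from typing import Dict, List

FIELDS = ["word", "start_sec", "end_sec", "speaker", "confidence"]


def words_to_csv(words: List[Dict]) -> str:
    """Serialize word records to CSV text with a header row."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for word in words:
        # Keep only the known columns, in a fixed order.
        writer.writerow({field: word[field] for field in FIELDS})
    return buffer.getvalue()
```

Using the `csv` module rather than string joins matters here because transcribed tokens routinely contain commas and quotes, which the writer escapes correctly.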

Does it support technical jargon and proper nouns?

Yes — vocabulary hints let you boost the likelihood of specific terms. Useful for domain content with product names, technical concepts, or non-English proper nouns.

Can I transcribe just audio (no video)?

Yes. Production APIs accept URLs to MP3, WAV, M4A, and FLAC files alongside video formats. The same endpoint handles both.

How does the OpusClip API handle long-form video?

The transcription endpoint supports up to 4 hours per source in a single job. For longer content, chunk into segments and run parallel jobs. For long jobs, webhook delivery is preferable to polling.
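
The chunking itself is simple arithmetic. Here is a sketch that plans chunk boundaries under the 4-hour limit and shifts each chunk's word timestamps back onto the timeline of the full recording. The function names and the word dictionary shape are assumptions for illustration.

```python
from typing import Dict, List, Tuple

MAX_CHUNK_SEC = 4 * 60 * 60  # the 4-hour per-source limit stated above


def plan_chunks(
    total_sec: float, max_chunk_sec: float = MAX_CHUNK_SEC
) -> List[Tuple[float, float]]:
    """Split [0, total_sec] into consecutive (start, end) ranges of at most max_chunk_sec."""
    if total_sec <= 0 or max_chunk_sec <= 0:
        raise ValueError("durations must be positive")
    chunks = []
    start = 0.0
    while start < total_sec:
        end = min(start + max_chunk_sec, total_sec)
        chunks.append((start, end))
        start = end
    return chunks


def rebase(words: List[Dict], chunk_start: float) -> List[Dict]:
    """Shift chunk-relative timestamps onto the original recording's timeline."""
    return [
        {**word, "start": word["start"] + chunk_start, "end": word["end"] + chunk_start}
        for word in words
    ]
```

Two caveats apply to any fixed-offset split. A cut can land in the middle of a word, so cutting at a silence near each boundary is safer. Anonymous speaker labels produced by independent jobs will likely not match each other, so speakers may need to be reconciled across chunks.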

Next steps

For speaker-attribution detail, see Speaker Diarization for Multi-Speaker Video. For captions on top of transcripts, see How to Add Captions to a Video. For pull quotes, see Extract Pull Quotes from Video.